
The Architect’s Survival Guide to AI-Augmented Delivery

What changed at TDX 2026, and what architects need to do about it.

The first time you watch AI rewrite a chunk of your codebase, you feel a small flicker of panic. You asked it to refactor one function. It quietly touched seven. You click through the diff and the floor moves slightly under your feet. You either accept the change because the build still passes, or you start scrolling line by line trying to work out what just happened to the thing you wrote last week.

If you’ve vibe coded for any length of time, you know the feeling.

At TDX 2026 the Architecting AI session put a number on it. One AI cycle, the speakers said, took a codebase from 8,900 lines down to 4,000. Build still ran. Tests still passed. Nothing screamed. The AI’s summary said the change was small. The code said otherwise.

That’s the gap this article is about. Not whether AI is useful in delivery; it obviously is. The question is whether the architect’s role has caught up with what the tooling now does without asking. Below: what changed at TDX, the new disciplines worth adopting on Monday, and the parts of the SDLC where you cannot delegate, no matter how good the model gets.

The job changed. Most architects haven’t.

Two years ago, an architect’s value was visible in what they shipped. Diagrams, schemas, code reviews, the integration patterns that survived audit. The model worked because the architect was the one closest to the build.

The Architecting AI session at TDX put it differently. You’re no longer just a builder. You’re the one guiding the builder. The role inverts. The architect’s value is in what they prevent the AI from getting wrong, not in the volume of what they personally produce.

AI suggests. Architects decide.

This is uncomfortable for two reasons. First, it’s harder to measure. A well-judged “no” doesn’t show up in a sprint report. Second, the AI is fast and convincing. As one of the speakers put it: “It just loves to stroke you. ‘You have a beautiful, complete solution.’ And we’re like... calm down, there’s more to go.”

Architects who stay measured by velocity will lose to AI on velocity, every time. Architects who reposition around the parts of the SDLC the AI cannot judge become more valuable. Those parts: risk, intent, integrity, regulatory posture. Your job now is to stay in command, and to make the case for staying in command.

Two disciplines, two risk profiles

Most teams treat AI as one thing. It isn’t. There are two distinct disciplines, and conflating them is what gets architectures into trouble.

Using AI means consuming AI tools to produce code, designs and tests faster. The risk profile is the architect’s: drift, silent regression, designs that look complete but aren’t.

Building AI means architecting a system that itself reasons, acts, and remembers. The risk profile is the customer’s: unpredictable behaviour, runaway tool calls, audit failure when something goes wrong.

They share a model and not much else. The governance, the controls, the audit trail: different for each.

| | Using AI | Building AI |
|---|---|---|
| Goal | Producing artefacts faster: code, designs, tests | Producing a system that reasons, acts, and remembers |
| Primary risk | Drift between intent and code | Drift between intent and behaviour at run time |
| Where humans stay in command | Design checkpoints, diff review, TRD lock | Tool boundaries, kill switch, observability, blast radius |
| Audit trail you need | Commit-per-cycle, TRD-versioned changes | Decision logs, agent traces, tool invocation history |
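To make the Building AI column concrete, here is a minimal sketch of a tool boundary with a kill switch, a budget cap and an invocation history. Everything in it is hypothetical: `ToolGuard`, `AgentHalted` and the cost unit are illustrative names, not part of Agentforce or any real agent framework.

```python
from dataclasses import dataclass, field


class AgentHalted(Exception):
    """Raised when the guard refuses a tool call."""


@dataclass
class ToolGuard:
    # All names here are illustrative assumptions, not a real framework API.
    allowed_tools: set            # tool boundary: names the agent may call
    budget: float                 # budget cap, in whatever cost unit you track
    spent: float = 0.0
    killed: bool = False          # kill switch, flipped by a human or a monitor
    history: list = field(default_factory=list)  # tool invocation history

    def kill(self):
        self.killed = True

    def call(self, name, func, cost, *args):
        # Record every attempt, allowed or not, so the audit trail is complete.
        entry = {"tool": name, "args": args, "cost": cost, "allowed": False}
        self.history.append(entry)
        if self.killed:
            raise AgentHalted("kill switch is on")
        if name not in self.allowed_tools:
            raise AgentHalted(f"tool {name!r} is outside the boundary")
        if self.spent + cost > self.budget:
            raise AgentHalted("budget cap reached")
        entry["allowed"] = True
        self.spent += cost
        return func(*args)
```

The design choice worth noting is that refused calls are logged as well as successful ones. When something goes wrong, the attempts the agent was not allowed to make are often the more useful half of the trace.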

The AI-augmented SDLC: where the checkpoints live

Walk it phase by phase. At every boundary, the architect either holds a checkpoint or loses one.


Plan

Architects belong upfront, not at sign-off. A plan with no architectural input is a plan that becomes expensive to fix in the design phase. Lock the scope, the constraints and the non-functionals before any AI cycle starts.

Requirements

AI inflates. One sentence from a stakeholder (“I need a system to track contractor pay”) becomes two pages of requirements, one hundred pages of use cases, and ten thousand lines of code. That’s the balloon problem. The checkpoint is the Technical Requirements Document (TRD): locked and versioned, anchoring every cycle that follows.
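One way to make "locked and versioned" checkable is to fingerprint the TRD at sign-off and refuse to start a cycle if the text has moved since. The sketch below assumes the TRD is available as plain text; `trd_fingerprint` and `build_prompt` are hypothetical helpers, and the prompt layout is only an illustration.

```python
import hashlib


def trd_fingerprint(trd_text: str) -> str:
    """Return a short, stable fingerprint of the TRD content."""
    # Ignore trailing whitespace so cosmetic edits do not change the lock.
    normalised = "\n".join(line.rstrip() for line in trd_text.strip().splitlines())
    return hashlib.sha256(normalised.encode("utf-8")).hexdigest()[:12]


def build_prompt(task: str, trd_text: str, locked_fingerprint: str) -> str:
    """Build a cycle prompt, refusing if the TRD changed since it was locked."""
    current = trd_fingerprint(trd_text)
    if current != locked_fingerprint:
        raise ValueError(f"TRD changed since lock: {locked_fingerprint} -> {current}")
    return f"TRD version {current}\n---\n{trd_text.strip()}\n---\nTask: {task}"
```

Recording the same fingerprint in the commit message for each cycle would tie every change back to the TRD version it was generated against.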

Design

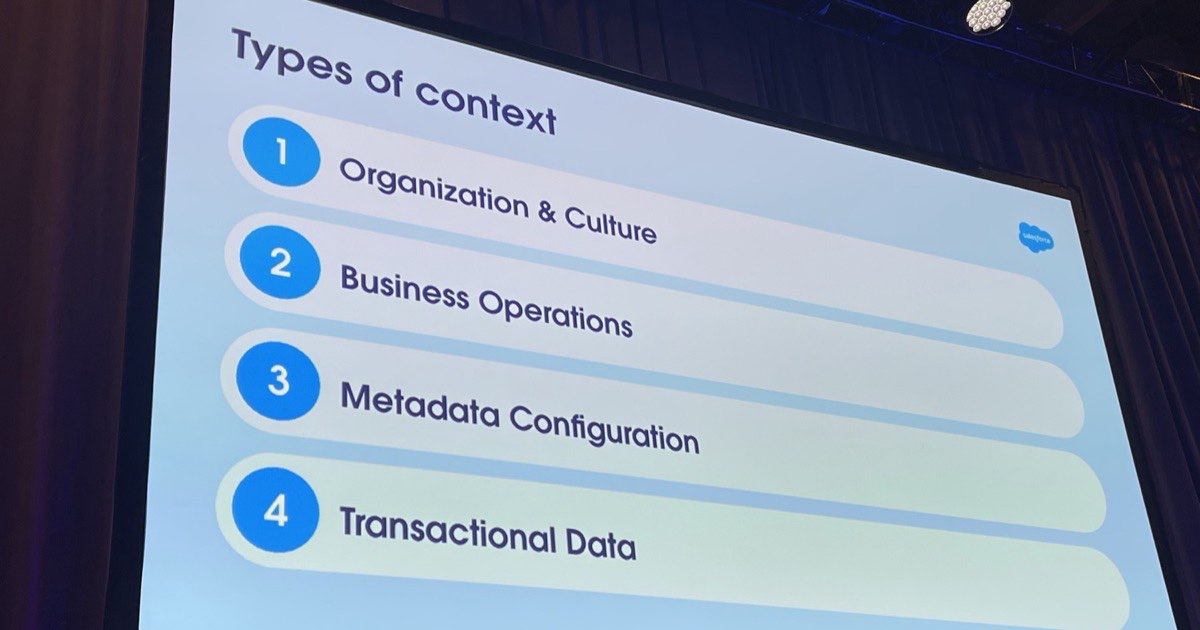

Fast output, weak architecture is the failure mode. AI produces designs that look finished. The diagrams are there, the sequence flows, the schemas. The cross-cutting concerns are not. Identity, observability, blast radius, multi-tenancy, regulatory posture. None of those appear unless you prompt for them. This is where the architect’s special sauce lives.

Build

Vibe development is fine when it’s bounded. The discipline is commit-per-AI-cycle: one feature, one cycle, one commit, diff-reviewed before merge. (More on this below.)

Vibe development is the start, not the strategy.

Test

AI-generated tests prove behaviour, not intent. A passing suite is not a guarantee that the system does the right thing. Humans validate intent. The architect’s job is to define what “right” means before the build, not after the bug.

Deploy

Borrow Google’s playbook from the Agentforce Journey session: shadow first, then supervised, then automated, only at 80–90% accuracy thresholds. Treat rollout as part of the architecture, not the project plan.
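The shadow, supervised, automated sequence can be sketched as a small promotion gate. The specifics are assumptions: the 0.85 default sits inside the 80–90% band quoted above, the minimum sample size is invented for illustration, and applying the same threshold at every promotion is one possible reading rather than something the session specified.

```python
STAGES = ["shadow", "supervised", "automated"]


def next_stage(current: str, correct: int, total: int,
               threshold: float = 0.85, min_samples: int = 100) -> str:
    """Promote one stage at a time, and only on enough evidence."""
    if current not in STAGES:
        raise ValueError(f"unknown stage: {current}")
    if total < min_samples:
        return current                  # not enough evidence yet
    accuracy = correct / total
    if accuracy < threshold:
        return current                  # hold at the current stage
    index = STAGES.index(current)
    # Move forward by one stage at most; "automated" is the last stop.
    return STAGES[min(index + 1, len(STAGES) - 1)]
```

Keeping the gate in code rather than in a project plan means the threshold is reviewed and versioned like any other architectural decision.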

A design can look complete and still be architecturally incomplete

This was the most-quoted sentence of the Architecting AI session, and it’s the one to put on the wall.

AI is excellent at producing artefacts that look finished. The diagram has all the boxes. The schema has all the fields. The sequence flow has all the arrows. What it misses is the design behind the design. Where does identity flow? What’s the blast radius if this service fails? Where does observability live? Who owns the data model when two products diverge?

Two parts of an AI-augmented design stay human-only.

Do not trust AI on the data model.

It’s a hard line. Schemas drive everything downstream: refactor cost, regulatory exposure, the integrity of every report a CXO ever sees. AI will produce a schema. Don’t ship it without an architect having designed it.

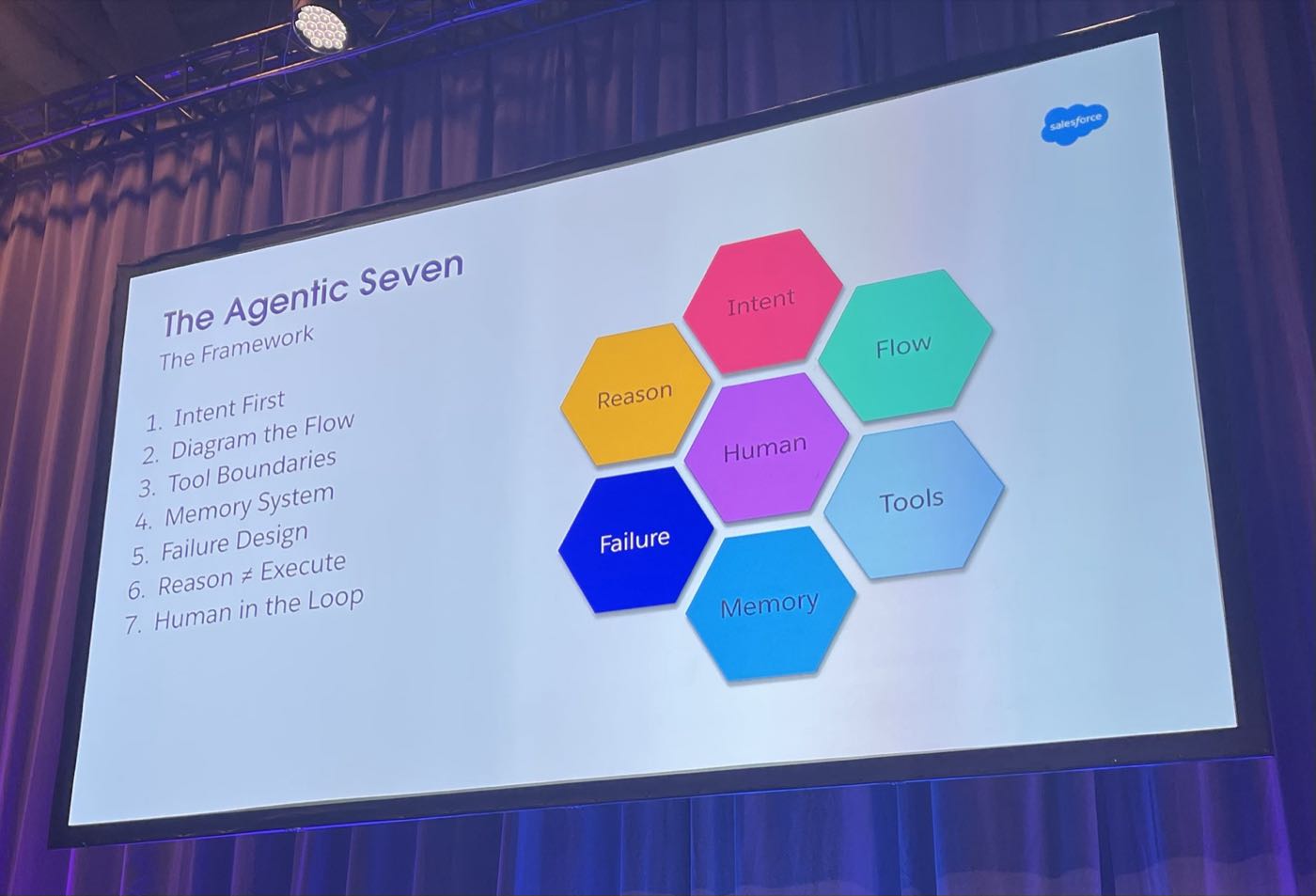

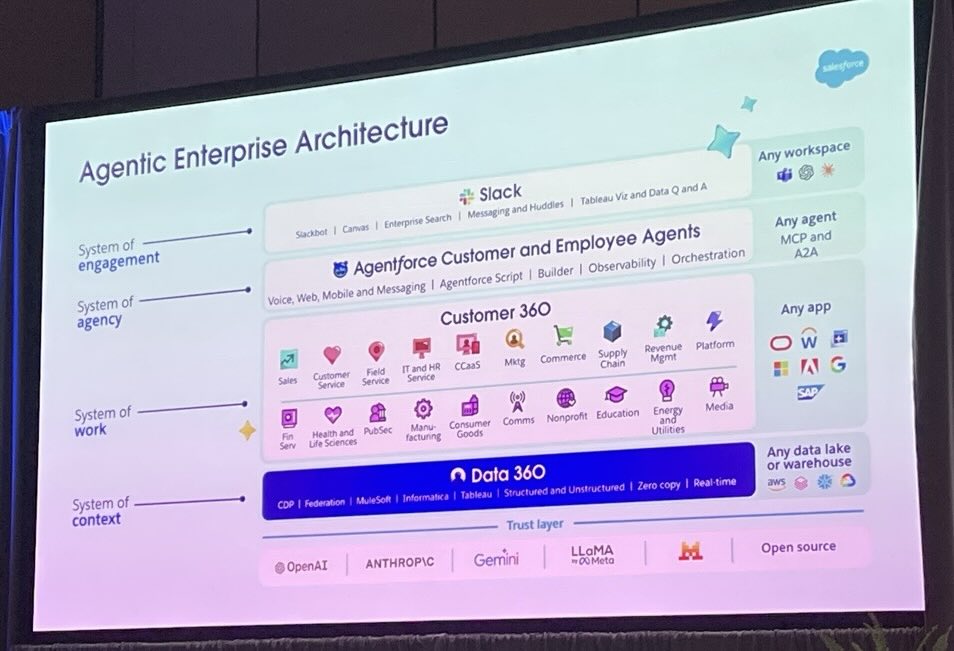

The second part is integration boundaries. The Agentic Architecture session at TDX framed enterprise architecture as urban planning. Without it, you don’t get a city; you get a shanty town. AI will build neighbourhoods enthusiastically. Architects zone the city. Decide where APIs go, where MCP fits, when A2A is appropriate, when bridges make sense. AI does not have the context to answer those questions, and the cost of getting them wrong is structural.

Enterprise architecture is urban planning. Without it, you get slums.

The architect’s real product is the design behind the design.

Commit-per-AI-cycle: the discipline that saves your codebase

Back to the 8,900-line story. The reason it didn’t scream is that there was no commit boundary between the version the team trusted and the version the AI rewrote. The AI’s summary was reassuring; the diff would have been alarming. Nobody opened the diff.

Commit-per-AI-cycle is the discipline that fixes this. Four rules:

- One feature, one cycle, one commit. If a cycle changes more than one thing, split it.

- Diff-review every cycle. Read the diff, not the AI’s summary of the diff.

- Lock the TRD before each cycle. Reference it explicitly in the prompt. Without it, instructions are slippery; they drift across cycles and you can’t tell what the model is optimising for.

- Roll back without ceremony. A cycle is cheap. Rolling back isn’t failure. It’s how the workflow works.
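The rules above still depend on a person opening the diff, but a small guard can flag cycles that changed far more than their scope suggests. This sketch totals the output of `git diff --numstat` (tab-separated added, deleted and path columns, with `-` in place of counts for binary files). The function names and the default limits are illustrative assumptions to tune per team.

```python
def summarise_numstat(numstat: str) -> dict:
    """Total up the text output of `git diff --numstat`."""
    files, added, deleted = 0, 0, 0
    for line in numstat.splitlines():
        parts = line.split("\t")
        if len(parts) < 3:
            continue                    # skip blank or malformed lines
        files += 1
        # Binary files report "-" for both counts: count the file, not lines.
        if parts[0].isdigit():
            added += int(parts[0])
        if parts[1].isdigit():
            deleted += int(parts[1])
    return {"files": files, "added": added, "deleted": deleted}


def cycle_out_of_scope(numstat: str, max_files: int = 3,
                       max_deleted: int = 200) -> bool:
    """Flag a cycle that touched more files or removed more lines than expected."""
    summary = summarise_numstat(numstat)
    return summary["files"] > max_files or summary["deleted"] > max_deleted
```

A cycle like the one in the 8,900-line story, which removed roughly 4,900 lines, would trip the deletion limit long before anyone read the summary. The guard does not replace the diff review; it only tells you which cycles to split or roll back first.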

One Architecting AI quote captures the upside: “I could erase all my code, say ‘build it according to the TRD,’ and it would almost appear correct.” That’s the test. If your TRD is good enough that the codebase could be regenerated from it, the architecture is in shape. If it isn’t, the architecture is hidden in the heads of whoever last committed.

The architect’s survival checklist

Print this. Tape it to your monitor. The disciplines below survived contact with the worst rooms at TDX.

| Discipline | What it looks like | What is at stake |
|---|---|---|
| Lock the TRD before generation | TRD signed off and version-controlled before any AI cycle starts | Drift between intent and code |
| Own the data model | Schema designed by humans, reviewed against business capabilities | Refactor cost and regulatory exposure |
| Commit-per-cycle | One scoped change per AI cycle, diff-reviewed before merge | Silent regression |
| Architect at design checkpoint | Cross-cutting concerns reviewed and signed off before build | Architectural incompleteness |
| Verify intent, not just behaviour | Tests prove the system does the right thing, beyond simply running | Confidently wrong outputs |
| Bound the agent’s blast radius | Tool list scoped to intent, with a kill switch and a budget cap | Runaway cost and audit failure |
| Observability before deploy | Logs, traces and agent decisions persisted from day one | Debuggability and trust |
| Phase the rollout | Shadow, then supervised, then automated, with explicit accuracy thresholds | Production safety |

What this means for your CTO, CIO or COO

If you’re an architect, you can stop here. The next two paragraphs are the version to forward up the chain.

The shift is this. AI is now a delivery accelerator, but only if the architectural disciplines around it are funded and respected. Cut those, and the productivity gain disappears into rework, audit risk, and the kind of silent regression that doesn’t show up until a regulator or a customer finds it for you. Your architects are not the bottleneck. They are the audit trail.

The control to ask for: every AI cycle reviewed, every architectural decision logged, every rollout phased. The clarity to ask for: a TRD per stream that’s good enough to regenerate the system from. The confidence to ask for: a delivery you’d put in front of a regulator on Monday.

Want a calm review of your AI-augmented delivery?

If your delivery is moving faster than your architecture can defend, that’s the gap we close. Start with a Delivery Control Snapshot: a one-page risk view of your AI-augmented programme that your sponsor can act on this week.

Get in touch

Further reading

Source material and authoritative references for the topics in this article.

- Salesforce Agentforce platform. The official Agentforce product page, including Agent Script and the Atlas Reasoning Engine.

- Model Context Protocol (MCP) specification. The open standard behind the integration patterns referenced in the design and build phases.

- Your Agent Isn’t Broken. Your Architecture Is. The companion piece on the five failure modes behind production agent disasters.
